Regulations.gov Continues to Improve, but Still Has Potential for Growth

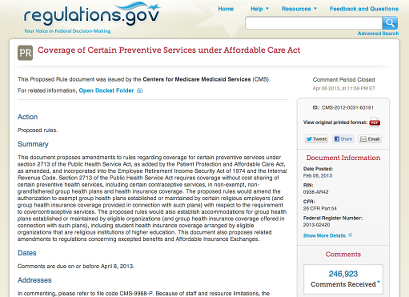

Recently, the EPA eRulemaking team released a new version of Regulations.gov, a website that tracks the various stages of the rulemaking processes of hundreds of federal agencies, and collects and publishes comments from the public about this rulemaking. We’ve written about Regulations.gov before, and continue to be impressed with the site’s progress in making the sometimes-daunting intricacies of federal regulations more approachable to members of the general public.

This release brings several new features that further this goal. Styling on many document pages has been significantly improved, making it much easier to read both rule and comment text. The presentation of metadata has also been made cleaner, so researchers can more easily find identifiers that help them connect a particular rule to related documents on other websites, such as FederalRegister.gov or RegInfo.gov. New panes have also been added to help users understand the public participation that has occurred so far in a given rulemaking, and to more easily recognize opportunities for further participation.

Of course, since last year’s release of the Regulations.gov API, Regulations.gov is more than just an informational website; it has also become a data provider that facilitates a variety of third-party participation and analysis tools, as its Developers page now highlights. One such tool is Sunlight’s recently released Docket Wrench, which uses Regulations.gov data to explore questions of corporate and public influence in the federal regulatory process. Docket Wrench evolved from two years’ worth of effort exploring the possibilities of analysis on federal regulatory comment data, and we believe the time we’ve spent building it has given us a unique perspective on the avenues of research this data makes available, as well as the opportunities for further growth and improvement in regulatory comment data going forward.

The team behind Regulations.gov deserves enormous credit for the progress they’ve made, but there remains much work to be done to give the public a complete, accessible and useful path into the federal regulatory process. The following aspects of regulatory comment data are at the top of our wishlist for improvement:

Completeness

Without a doubt, the single biggest impediment to comprehensive analysis of regulatory participation using Regulations.gov is its lack of coverage of all federal agencies. The White House has made admirable strides in encouraging agencies to centralize on a single web platform for housing regulatory comments, most notably in the form of Executive Order 13563. But this order only requires agencies to participate “to the extent feasible,” and exempts independent regulatory agencies from having to participate at all. It turns out the list of agencies that are considered independent is surprisingly long, and includes many agencies whose data we, as researchers of money-in-politics issues, would be very much interested in having, such as the FCC, the SEC, the FEC, and the Federal Reserve.

Without broader participation, research and watchdog organizations must choose between either excluding these non-participating agencies from their work or writing additional interface software to interact with the bespoke comment management systems of these agencies, most of which don’t have API access as Regulations.gov does. Fortunately, precedent exists for the voluntary participation of independent regulatory agencies such as the Consumer Product Safety Commission in the use of Regulations.gov for comment hosting, and we hope this participation can serve as a model for future agency engagement.

Metadata

Analysis using Regulations.gov data is often tricky because of problems with the metadata attached to both Federal Register documents and comments. For us, the most prominent of these include:

- Lack of standard fields: likely due to the history of Regulations.gov and its gradual assumption of the role of government-wide regulatory comment clearinghouse, and the need to accommodate various pre-existing internal agency processes, there’s a lot of variability from agency to agency in the metadata that’s attached to content. We’ve found that there are somewhere on the order of forty possible metadata fields agencies can use to describe documents on Regulations.gov, and almost none of them are mandatory (the only required ones are posted date, title, and the documents themselves; it’s also permissible for the title to be defined but uninformative, like “Public Comment”). The important ones that are missing, from the perspective of trying to assess influence on government (our primary area of research), relate to whom the comment came from: submitter name and submitter organization. Without these, it’s extremely difficult to do any kind of aggregate analysis about the source of comments without having someone read the comments and manually classify who they’re from. Complicating matters, our sense is that this isn’t just a data quality problem (that is to say, it’s not just that agencies have this data but don’t offer it in structured form); rather, it appears that many agencies don’t even collect this information when they accept comment submissions, nor do they seem to be required to do so at present.

- Lack of corporate identifiers: expanding on the above, some other online sources of data about corporate engagement in government (such as SOPRweb, the Senate’s lobbying disclosure system) assign unique IDs to corporate actors to make them easier to track across engagements. It would be incredibly useful if regulatory commenters had unique IDs that could be used to track them across comments. Even for comments that include separate fields for submitters, we run into the name-resolution problems typical of our research, which make it difficult to definitively identify regulatory commenters. A concrete example from some recent work by Sunlight’s Reporting Group revolved around the difficulty of grouping “U.S. Chamber of Commerce,” “Chamber of Commerce,” and “United States Chamber of Commerce” together by automated technical means, and separating them from other distinct institutions that also have “Chamber of Commerce” in their names. Corporate identifiers involve a complex set of policy issues, but without them, it’s difficult to be especially confident in the results of research that attempts to quantify submitter behavior.

- Lack of standard regulatory identifiers: the federal government posts rules to around a half-dozen different websites (depending on how one counts), and it’s surprisingly difficult to tie a given rule as it’s displayed on one site to the exact same rule as it’s displayed on a different one. In our particular case, this means it’s tricky to integrate our Docket Wrench tool (which gets its data from Regulations.gov) with our Scout notification service (which gets its regulatory data from FederalRegister.gov). It’s also non-trivial to add comment data scraped from the websites of non-Regulations.gov entities and match it up to those agencies’ rules as they appear on Regulations.gov. Among the different identifiers that we see for the same rule on various websites are Regulations.gov’s own ID number, the Federal Register number assigned by the Office of the Federal Register, the Federal Register print edition volume and page number, the Regulatory Identification Number (RIN) assigned by the Office of Information and Regulatory Affairs (OIRA), and various agency-specific docket numbers and codes. With the exception of the first, none of these is guaranteed to be attached to any particular rulemaking document on Regulations.gov.
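To make the name-resolution problem from the corporate identifiers item above more concrete, here is a minimal Python sketch of naive string normalization. It is an illustration under stated assumptions, not the method used by Docket Wrench or Sunlight’s Reporting Group: the functions `normalize_name` and `group_submitters`, the single abbreviation rule, and the “Greater Springfield” name are all hypothetical.

```python
import re
from collections import defaultdict


def normalize_name(raw):
    """Lowercase, drop punctuation, and expand one assumed abbreviation."""
    name = re.sub(r"[^a-z0-9 ]", "", raw.lower())  # "U.S." becomes "us"
    name = re.sub(r"\s+", " ", name).strip()
    # Assumed rule for this sketch only: a leading "us" means "united states".
    return re.sub(r"^us ", "united states ", name)


def group_submitters(names):
    """Group raw submitter strings by their normalized form."""
    groups = defaultdict(list)
    for raw in names:
        groups[normalize_name(raw)].append(raw)
    return dict(groups)


submitters = [
    "U.S. Chamber of Commerce",
    "United States Chamber of Commerce",
    "Chamber of Commerce",
    "Greater Springfield Chamber of Commerce",  # made-up name for illustration
]

groups = group_submitters(submitters)
for key, members in groups.items():
    print(key, "->", members)
```

In this sketch the first two variants collapse into one group, but the bare “Chamber of Commerce” stays separate: string cleanup alone cannot tell whether it refers to the national organization or to a local one. That ambiguity is the kind of gap a unique submitter ID would close.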

Bulk Data

No source of regulatory comment information (whether Regulations.gov, other agency websites, or anything else) is available in bulk. APIs are useful for querying subsets of a database, but using them to perform aggregate analysis can be arduous, since collecting all of the data from Regulations.gov involves literally millions of separate API requests. The process took us over a month to complete. Further, Regulations.gov doesn’t make the actual raw text of most document attachments available at all (via the API or otherwise), requiring researchers interested in analyzing this text to extract it for themselves from source documents in a variety of formats (Word, PDF, etc.).
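A quick back-of-the-envelope calculation shows why request-by-request collection adds up. The sketch below assumes a fixed hourly rate limit; both the request counts and the 1,000-requests-per-hour figure are illustrative assumptions, not numbers published by Regulations.gov or measured in our own crawl, and `crawl_days` is a hypothetical helper.

```python
def crawl_days(total_requests, requests_per_hour):
    """Days needed to issue total_requests at a constant hourly rate limit."""
    hours = total_requests / requests_per_hour
    return hours / 24


# Illustrative inputs only: neither the totals nor the rate limit are real figures.
ASSUMED_RATE_LIMIT = 1_000  # requests per hour (assumption)

for total in (1_000_000, 3_000_000):
    days = crawl_days(total, ASSUMED_RATE_LIMIT)
    print(f"{total:,} requests -> about {days:.1f} days")
```

Under these assumptions, even the smaller total takes well over a month of continuous requests, before accounting for retries, failures, or the separate work of downloading attachments.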

While the lack of bulk data is a limitation that merely complicates our own data collection efforts, it can be much more taxing for researchers with fewer technical resources, putting aggregate analysis of regulatory comment data out of reach for many people who are interested in the field. Sunlight has written before about the tendency among government agencies to prefer the publication of APIs to the publication of bulk data, and this definitely seems like a circumstance where there are valid use cases for both.

Conclusion

Despite the current limitations, we continue to find new opportunities to make use of this rich dataset, and to be pleased with the work of the team that publishes it. We hope to continue to engage with the eRulemaking team in the coming months to fully realize the analytical potential this data offers.