OpenGov Voices: 3 simple ways cities can improve access to online information

Disclaimer: The opinions expressed by the guest blogger and those providing comments are theirs alone and do not reflect the opinions of the Sunlight Foundation or any employee  thereof. Sunlight Foundation is not responsible for the accuracy of any of the information within the guest blog.

thereof. Sunlight Foundation is not responsible for the accuracy of any of the information within the guest blog.

Matt MacDonald is the co-founder and president at NearbyFYI. NearbyFYI collects city government data and documents, helping make local government information accessible and understood. He can be reached at matt@nearbyfyi.com. Matt is also one of the winners of Sunlight Foundation’s OpenGov Grants.

At NearbyFYI we review online information and documents from hundreds of city and town websites. Our CityCrawler service has found and extracted text from over 100,000 documents for the more than 170 Vermont cities and towns that we track. We’re adding new documents and municipal websites all the time, and we wanted to share a few tips that make it easier for citizens to find meeting minutes, permit forms and documents online. The information below is written for a non-technical audience but some of the changes might require assistance from your webmaster, IT department or website vendor.

Create a unique web page for each document or form

Each city or town meeting that occurs should have its own unique web page for agenda items, meeting minutes and other documents. We often see cities and towns creating a single, very large web page that contains an entire year of meeting minutes. This may be convenient for the person posting the meeting minutes online but presents a number of challenges for the citizen who is trying to find a specific meeting agenda or the minutes from that meeting.

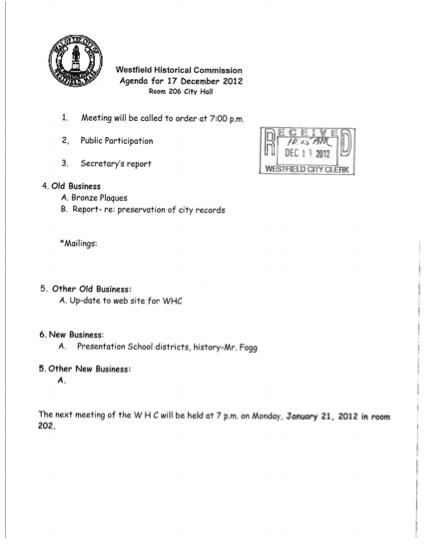

Here is an example of meeting minutes that are in a single page that requires the citizen to scroll and scroll to find what they are looking for. This long archived page structure also presents challenges to web crawlers and tools that look to create structured information from the text. Proctor, VT provides a good example for what we look for in a unique meeting minutes document. We like that this document can answer the following questions:

-

Which town created the document? (Proctor)

-

What type of document is this? (Meeting Minutes)

-

Which legislative body is responsible for the document (Selectboard)

-

When was the meeting? (November 27, 2012 – it’s better to use a full date format like this)

-

Which board members attended the meeting? (Eric, Lloyd, Vincent, Bruce, William)

The only thing that could improve the access to this document is if it was saved as a plain text file rather than a PDF file. Creating a single web page or document for each meeting means that citizens don’t have to scan very large documents to find what they are looking for.

Save PDFs as text not images

After running our CityCrawler for several months it’s clear that cities and towns love the PDF file format to share information online. While PDF files can be a quick way to post information online, cities are often publishing documents that are scanned images which is no better than taking a photo of the document and posting it on Instagram.

The challenge here is that search engines must use OCR (Optical Character Recognition) software to try and extract the text from the image. Anything that makes it harder for search engines to index your documents means that fewer citizens are going to find your published information. At NearbyFYI we often see documents that could easily be saved as a text PDF but are scanned as images.

Here is how this document could easily be converted to a format that search engines can use.

Steps to convert your PDF to text

-

Scan the meeting minutes document and save it as a PDF.

-

Open the scanned document in Adobe Acrobat.

-

Select “File> Export> Text> Text Plain.”

-

Name the document and click “Save.”

-

Open the saved file and review for conversion errors.

-

Save the corrected document and post to your website.

Allow web crawlers in Robots.txt

Most information online is found via search tools like Google or Bing. Google uses what is called a web crawler or web page indexer to review your website documents so that they can add your content to their search index. This is a good thing, you want the search companies to find your content as it’s likely the way most citizens are going to look for information about your community.

Robots.txt files contain a simple set of rules that web crawlers follow. Some websites are setup to allow crawlers others aren’t. This is an example of a robots.txt file from a city who’s online information won’t be found with a Google Search:

User-agent: * Disallow: /

What this means is that when a citizen searches Google for “how to get a zoning permit in pownal, vt ” they won’t find this page: http://www.pownalvt.org/planning-commission/town-plan/zoning-rules/zoning-permit-application/. Ensuring that web crawlers can access the documents you post on your website is likely the simplest thing that you can do to improve citizen access.

Next steps

We’re excited about the OpenGov Grant from the Sunlight Foundation and how it will help advance our municipal data collection efforts. We’re using the grant funds to pay for updated infrastructure and tooling that allows us to take our existing municipal data collection work in Vermont to the national level. Our friends at Vermont Public Radio have shown how valuable the information published by our cities and towns is to becoming more informed civically and we want to make that available to everyone. If you are interested in learning more about how your city can improve access to meeting minutes, building permits and other public documents we’d love to hear from you at info@nearbyfyi.com.

Interested in writing a guest blog for Sunlight? Email us at guestblog@sunlightfoundation.com