Is there a good way to evaluate the impact of civic tech?

As open government and civic tech movements seek to understand how our work affects social inequalities, organizations and their leaders will need more nuanced and explicit ways to communicate how government reforms that use tech and data can generate impact.

Currently, civic tech and open government organizations default to measuring quantitative outputs like “number of open datasets shared” or “number of new daily active users” to demonstrate their impact. But at the Sunlight Foundation and among our peers, these metrics don’t capture the broad, cultural impacts we seek to have on governments and their constituents.

Demonstrating impact is difficult for any organization, and our field is no different. However, the legitimacy of democratic systems is being threatened in the U.S. and around the world, and civic tech and open government groups are facing the same questions from funders and practitioners alike: How does our work actually change society? How can data and technology play a role in building more powerful communities? In short, how does this work really matter?

Co-creating a simple evaluation tool

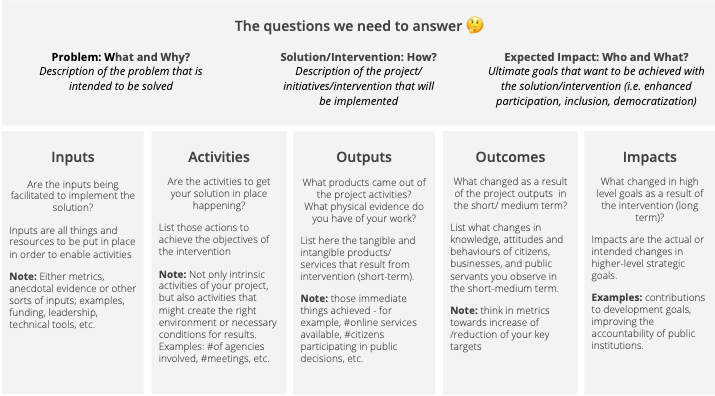

At the Open Government Partnership Summit in Ottawa last spring, Sunlight, BetaNYC, DATA Uruguay, ILDA, and the Digital Minister of Taiwan came together to explore the issue of impact assessment. Although we occupy differing roles within our countries' civic tech and data ecosystems, we all wanted to promote shared, holistic evaluations of the cultural and social progress that might emerge from open government and civic tech reforms. Our goal was to apply a simple but powerful canvas tool to help our peers plan how to measure the immediate and long-term results of their projects. Working in groups, participants used a project they were working on as an example and discussed whether the tool would help them meaningfully evaluate and reflect on their own work.

At Sunlight, UChicago fellow Kyra Sturgill tested the tool by using it to frame how community engagement around open data can effect change in cities and communities. In using it, she noted that civic tech and open data advocates in particular need tools like this to think about how their work makes a difference. Those working to improve governments through data and tech need to think beyond technology and data use to understand how their actions connect to changing and evolving societies.

Today, we’re sharing the tool itself with the hope that civic tech and open government practitioners will examine it, apply it, and share how it helped them evaluate their work.

Please review the tool, see if it fits your needs, customize it for your local context, and share your feedback by tweeting at @SunlightCities or by emailing opencities@sunlightfoundation.com.

Inherent challenges to measurement

Participants in the co-design session at OGP Ottawa raised a few consistent concerns about the tool and its ability to help measure impact. These were the most common challenges we heard.

“We won’t know the real impact of our work for a long time.”

As with any social change, efforts to advocate for, build, or institutionalize reforms in how governments and communities use technology to improve democracy will take a long time to manifest. However, a focus on measurability can also keep self-evaluators from setting any goals at all about how they might shape the future. Remaining flexible with regard to the "Impacts" column may help organizations be more aspirational about what they'll achieve without worrying about whether it can be measured in the short term.

“Our understanding of a project’s impact changes over time.”

While this is a challenge for reporting impacts to funders, it may not be a challenge for organizations evaluating themselves. We added a section at the top of the tool to capture "expected impacts" so they can be compared against actual impacts by the end of the project. Self-evaluation should be an ongoing process in which those using tools like this canvas update their assumptions regularly. We're open to feedback about how this tool could better help track changing assumptions about expected impact.

“Collecting data on these outcomes is not in our mandate.”

Most participants noted that they don't have the time or additional capacity to collect the data that would accurately describe their work's outcomes. This tension can lead organizations to collect outcome data that perpetuates an unclear understanding of work that is often already intangible. It was also difficult for participants to distinguish between data that measures outcomes and data that measures impacts. We would like to see how civic tech organizations might track their own outcomes, and we welcome any suggestions on how to improve the tool to better support innovators in the open government and civic technology space.

Once you've reviewed the tool, post your feedback online or share it with us at opencities@sunlightfoundation.com. We look forward to continuing this dialogue and will remain open about how we report on our work. Many thanks to my collaborators and fellow thought leaders Noel Hidalgo at BetaNYC, Daniel Carranza at DATA Uruguay, Carla Bonina at ILDA, and Audrey Tang, the Digital Minister of Taiwan.